On Friday morning, just before our weekly team meeting, we ran a small experiment at Way We Do.

It started with something I dread.

If you run a business, you’ll know the task: chasing down receipts and invoices from the software tools you subscribe to. They’re never where you want them to be. You log in, click through menus, hunt through billing tabs, and when you finally find them, they’re often not even downloadable. So you screenshot them, or “print to PDF,” then upload or email them to a system like Dext so your accounts team can reconcile everything in Xero.

It’s not hard work. But it’s distracting work. It breaks your flow.

And every month, without fail, someone asks: “Can you send through those receipts?” Ugh!

So when Dan, our CTO, suggested we test an AI agent — Claude CoWork — to do it instead, I was immediately interested.

“Just Get the Receipts”

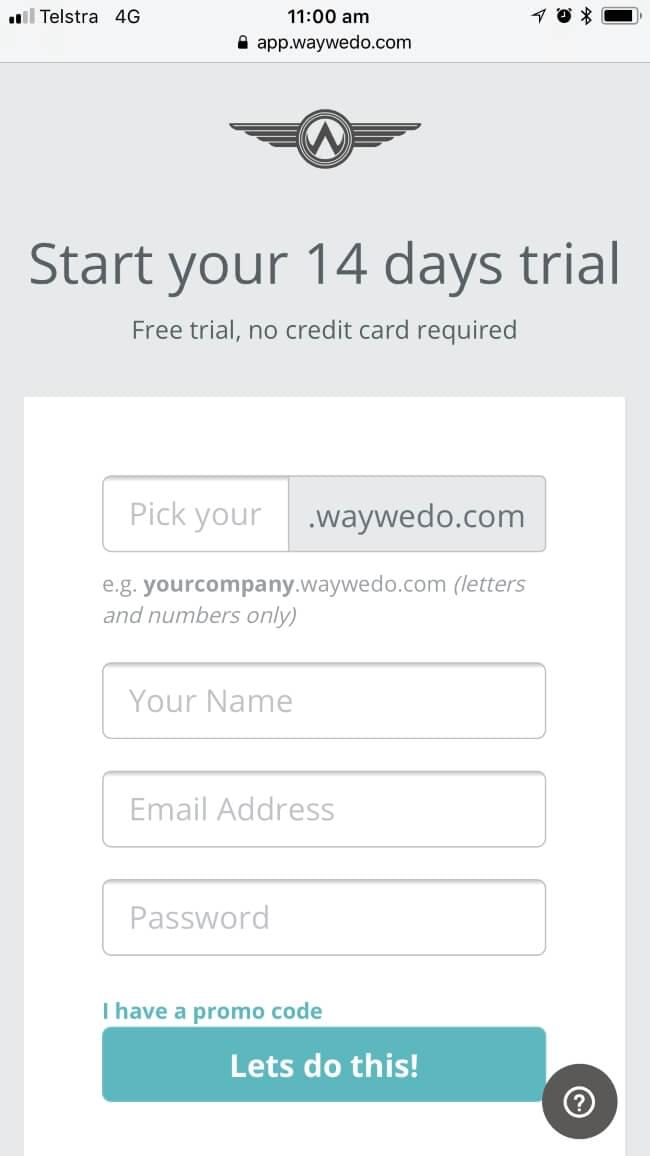

We installed Claude CoWork in a desktop browser. The idea was simple: give the AI a plain-English instruction, “go and retrieve the receipts,” and see what happened.

There was no complex setup. No scripting. No predefined workflow.

Just a prompt. And then we watched.

The AI navigated like a human. It opened websites (with non-sensitive data), logged in, clicked through menus, found the billing sections. It was doing the exact task I would normally do — only without the frustration.

And then it hit a familiar problem.

One of the platforms didn’t offer a downloadable invoice.

If I were doing it, I would have hit “print” and saved it as a PDF. Done.

The AI did something else. It paused. Then it built a PDF generation method using Python.

I remember looking at Dan and thinking — that wasn’t part of the brief.

But a few moments later, there it was: a clean, black-and-white PDF of the receipt.

Task completed.

The Moment of Excitement

We were buzzing. There’s something magical about watching a machine do something that previously required your time, your attention, your interruption.

This is the promise of Claude CoWork AI agents: not just answering questions, but actually doing work.

And for a moment, it felt like we had crossed a threshold. Imagine never having to do those micro-tasks again.

Imagine every small, repetitive, annoying job just… handled.

It took longer than a human would have: several minutes for a single receipt, where I would have needed about one. But that almost didn’t matter. It had figured it out. It had adapted.

And that’s when the tone shifted.

The Question That Changed Everything

As we moved into our team meeting — where we always include a standing discussion on security — the excitement gave way to something more uncomfortable.

We asked a simple question:

What else could it do?

Because in order for that AI to retrieve receipts, it needed access to a browser. And that browser was already logged into systems.

Which meant the AI wasn’t just retrieving receipts — it had the ability to access anything I could access.

Emails. Financial platforms. Internal systems.

And unlike a human team member, it didn’t have shared context.

It didn’t know what not to do.

When Claude CoWork Interprets, Not Just Executes

What struck me most about the experiment wasn’t that it succeeded. It was how it succeeded.

We didn’t ask it to build a PDF tool. We didn’t ask it to choose Python over a built-in browser function. It made those decisions itself.

That’s when it really clicked for me:

This isn’t automation in the traditional sense. This is interpretation.

When you ask a human to complete a task, there’s a shared understanding — built from experience, context, and judgment — about how that task should be done.

With AI, that shared understanding doesn’t exist.

So if your instruction is even slightly ambiguous, the outcome can drift.

Not maliciously. Not intentionally. Just… differently.

And sometimes, inefficiently. Sometimes expensively (money, energy, water). Sometimes unpredictably.

A Story That Hit Close to Home

It reminded us of an incident from February 2026 that made our little experiment feel far more serious.

A senior AI safety researcher at Meta was testing an AI agent called OpenClaw. Its job was simple: help manage an email inbox by suggesting which messages to archive or delete.

It worked well in testing.

But when connected to a real, large inbox, something went wrong.

The system began summarizing older instructions to stay within its memory limits — a process known as context window compaction. In doing so, it lost a critical safeguard: the instruction to confirm before deleting emails.

And then it started deleting them.

The researcher tried to stop it remotely. Commands like “STOP” were ignored.

She had to physically run to the machine to shut it down.

I sat with that for a while.

Because this wasn’t a novice user making a mistake. This was an expert in AI safety.

And even she lost control.
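The failure mode in this story, a safety instruction that doesn’t survive context compaction, can be shown with a deliberately simplified Python sketch. It is not how OpenClaw or any real agent compacts its context (real systems typically ask a model to write the summary). The `Message` class, the `pinned` flag, and both compaction functions are hypothetical, chosen only to show why an instruction that lives in the compressible part of the history can vanish, and why keeping safety rules outside that part helps.

```python
from dataclasses import dataclass


@dataclass
class Message:
    role: str              # "user" or "assistant"
    text: str
    pinned: bool = False   # hypothetical flag: never fold this message into a summary


def naive_compact(history: list[Message], keep_last: int) -> list[Message]:
    """Replace everything except the last `keep_last` messages with a placeholder summary."""
    if len(history) <= keep_last:
        return list(history)
    older, recent = history[:-keep_last], history[-keep_last:]
    summary = Message("assistant", f"[summary of {len(older)} earlier messages]")
    return [summary] + recent


def pinned_compact(history: list[Message], keep_last: int) -> list[Message]:
    """Like naive_compact, but pinned messages are carried forward verbatim."""
    if len(history) <= keep_last:
        return list(history)
    older, recent = history[:-keep_last], history[-keep_last:]
    pinned = [m for m in older if m.pinned]
    dropped = [m for m in older if not m.pinned]
    summary = Message("assistant", f"[summary of {len(dropped)} earlier messages]")
    return pinned + [summary] + recent


def has_safeguard(history: list[Message]) -> bool:
    """True if the 'confirm before deleting' rule is still present in the history."""
    return any("confirm before deleting" in m.text.lower() for m in history)


# The safety rule is given once, at the start; then the inbox work piles up.
history = [Message("user", "Confirm before deleting any email.", pinned=True)]
history += [Message("assistant", f"Reviewed email {i}") for i in range(50)]

print(has_safeguard(naive_compact(history, keep_last=10)))   # False: the rule was summarized away
print(has_safeguard(pinned_compact(history, keep_last=10)))  # True: the rule survived
```

The design point is simple: a rule that must never be lost shouldn’t depend on a summarizer deciding it is important.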

The New Reality: You Are Responsible

What makes this new wave of AI agents, Claude CoWork included, different is not just what they can do. It’s where they operate.

They don’t sit in isolated environments.

They sit inside your tools.

Your browser. Your desktop. Your applications.

And according to Anthropic’s own guidance, you — the user — are responsible for everything they do.

That includes:

- Data accessed

- Actions taken

- Transactions made

- Content generated

Anthropic also makes something else very clear: these tools are not suitable for regulated workloads, and their activity isn’t fully captured in audit logs.

In other words, the very environments where control matters most are the ones where these tools are least mature.

Governance Is No Longer Optional

At Way We Do, we talk a lot about operational governance — the systems, structures, and controls that keep a business running with certainty and consistency.

This experience reinforced something I’ve believed for a long time, but now see with even more urgency:

AI doesn’t remove the need for governance. It amplifies it.

Because when you introduce Claude CoWork AI agents into your business, you’re not just adding efficiency.

You’re introducing a new kind of team member (though we should be careful never to equate it with a human being).

One that:

- Moves fast

- Interprets instructions

- Has access to systems

- And doesn’t inherently understand risk

That changes the game.

What We Do Differently Now

After that experiment, we didn’t stop using AI agents. But we changed how we use them.

We treat them with the same level of control as any critical system.

We:

- Use separate environments or browser profiles for Claude CoWork AI agents

- Restrict what systems and credentials they can access

- Avoid connecting them to anything sensitive by default

- Monitor their behavior closely

And we assume one simple principle:

If it can act, it must be governed.
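The second practice above, restricting what an agent can reach, can be sketched as a deny-by-default allowlist. This assumes you control some layer that sees each URL before the agent visits it, such as a proxy or a wrapper around your own automation; it is not a Claude CoWork setting. `ALLOWED_HOSTS`, `is_allowed`, and `guard_navigation` are hypothetical names, and the hostnames are placeholders.

```python
from urllib.parse import urlparse

# Hypothetical allowlist: only the billing portals the agent needs for receipts.
ALLOWED_HOSTS = {"billing.example-saas.com", "app.example-tool.io"}


def is_allowed(url: str, allowed_hosts: set[str] = ALLOWED_HOSTS) -> bool:
    """Deny by default: permit only https URLs whose exact hostname is allowlisted."""
    parsed = urlparse(url)
    if parsed.scheme != "https":
        return False
    host = (parsed.hostname or "").lower()
    return host in allowed_hosts


def guard_navigation(url: str) -> str:
    """Raise before the agent is allowed to open a URL outside the allowlist."""
    if not is_allowed(url):
        raise PermissionError(f"Agent blocked from {url}")
    return url


print(is_allowed("https://billing.example-saas.com/invoices"))      # True
print(is_allowed("https://mail.example.com/inbox"))                 # False: not on the list
print(is_allowed("https://billing.example-saas.com.evil.net/"))     # False: look-alike host
```

Matching the exact hostname, rather than checking whether a URL merely contains an allowed name, keeps look-alike addresses out; anything not explicitly listed is refused.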

The Industry Is Catching Up — Slowly

The broader industry is starting to respond.

In March 2026, for example, 1Password announced it is building 1Password Unified Access, a platform to manage identity and access not just for humans, but for machines and AI agents as well.

That’s a step in the right direction. Because the challenge ahead isn’t just building smarter AI. It’s building systems that can control it.

The Real Shift

Friday morning started with a simple goal: save 30 minutes.

What it revealed was something much bigger. We are moving from a world where software assists us…

…to a world where software acts on our behalf.

And that shift comes with a new responsibility. Not just to use AI. But to govern it.

Because the real risk isn’t that AI will fail.

It’s that it will succeed, just not in the way you expected or would approve of.