Professional services firms — from law and accounting to architecture and consulting — are increasingly turning to artificial intelligence to deliver faster, more efficient advice. But with the rise of these tools comes a new frontier of liability, and with it, a serious challenge for the world of professional indemnity insurance.

Take, for example, a mid-sized legal practice that begins using a generative-AI tool to draft legal arguments. The tool produces an error: a fictitious case citation. A client relying on that work suffers a loss. Who is responsible — the human lawyer, the firm, the AI developer? And is the firm’s professional indemnity insurance geared to answer that question?

This scenario is no longer hypothetical. In recent months, insurers, brokers and risk managers have all raised the alarm about what has been dubbed “Silent AI” — AI-driven risks that fall into grey zones of coverage.

The Promise and the Perils of AI in Professional Services

The benefits of AI in professional services are compelling: faster research, automation of routine tasks, and improved data-analysis capabilities. According to one recent review, AI could contribute between 0.1% and 0.6% to annual productivity growth through 2040, with many professional-services firms already seeing gains.

Yet, the very advantages of AI also expose new risks. For example:

- AI output can be flawed, biased or poorly supervised.

- The question of liability — who is at fault — becomes complicated when AI plays a substantial role.

- Many current professional-indemnity (PI) policies were designed when human professionals, not autonomous AI systems, bore the lion’s share of responsibility.

For insurers specializing in PI cover (sometimes called professional liability insurance), the task is substantial. Traditional PI policies are intended to cover losses arising from negligent acts or omissions by human professionals.

But when AI becomes an active part of the service (whether as a tool or a quasi-advisor), the boundary between human error and machine error becomes blurred.

When AI Hallucinates: The Deloitte Example

In recent weeks, Deloitte Australia agreed to partially refund an AU$440,000 government contract after one of its reports was found to include AI-generated errors — fabricated court quotes and references to non-existent academic papers.

The 237-page report, produced for the Department of Employment and Workplace Relations, was later updated with a disclosure that a generative AI model, Azure OpenAI, had been used in its preparation.

While the incident was resolved quietly, it sparked a larger conversation:

- What happens when an AI system makes a factual or legal error?

- Who is liable — the consultant, the firm, or the technology provider?

- And does professional indemnity insurance (PI) even cover such situations?

This case was a wake-up call for the industry. It showed that AI “hallucinations” aren’t just theoretical — they can occur in real client deliverables, with real financial and reputational consequences.

The “Silent AI” Problem

Many professional-indemnity policies were designed in a pre-AI world, covering losses caused by human error. Now, firms are using AI to draft reports, analyze data, and even generate client recommendations.

Insurers are starting to identify what they call “silent AI” — risks that exist but are neither clearly included nor excluded in policy wording.

For example:

- If AI-generated content leads to a client loss, is it covered?

- Did the professional commit a negligent act, or did the failure lie with the AI tool?

- If the service was partially produced by AI, does it still qualify as a “professional service”?

- Should firms disclose their use of AI tools to their insurer?

Without clear clauses or oversight, professionals could find themselves uninsured for AI-driven mistakes, even when acting in good faith.

The Insurer’s Response: Underwriting, Endorsements and New Products

Insurers are already responding in several ways:

- They are asking more detailed questions at underwriting about the nature and extent of AI use within the insured firm: What kinds of tools are used? How is human oversight maintained? What governance exists around AI?

- Many insurers are introducing explicit exclusions for certain AI uses (e.g., unsupervised generative AI drafting) or are demanding specific endorsements for AI exposures.

- Some carriers are launching affirmative AI-liability policies: stand-alone or multi-line covers that explicitly cover losses arising from AI system failures or malfunctions. A recent example is a policy from the start-up Armilla, underwritten at Lloyd’s of London, for losses caused by chatbot errors.

For insureds (the professional services firms), this means the time has come to engage in deeper conversations with brokers and insurers:

- Review current PI policy wordings for AI-related exclusions or ambiguity.

- Develop robust internal controls around AI use: documentation of processes; inputs/outputs; human review; data quality audits.

- Be ready to disclose use of AI to insurers, not necessarily as a “risk” but as a business reality. Transparency may help avoid later coverage disputes.

Why Responsible AI Development Matters

The Deloitte case and the Armilla policy at Lloyd’s of London both highlight why AI must be built and used with rigorous human oversight and quality assurance. It’s not enough to simply “plug in the AI and away you go.”

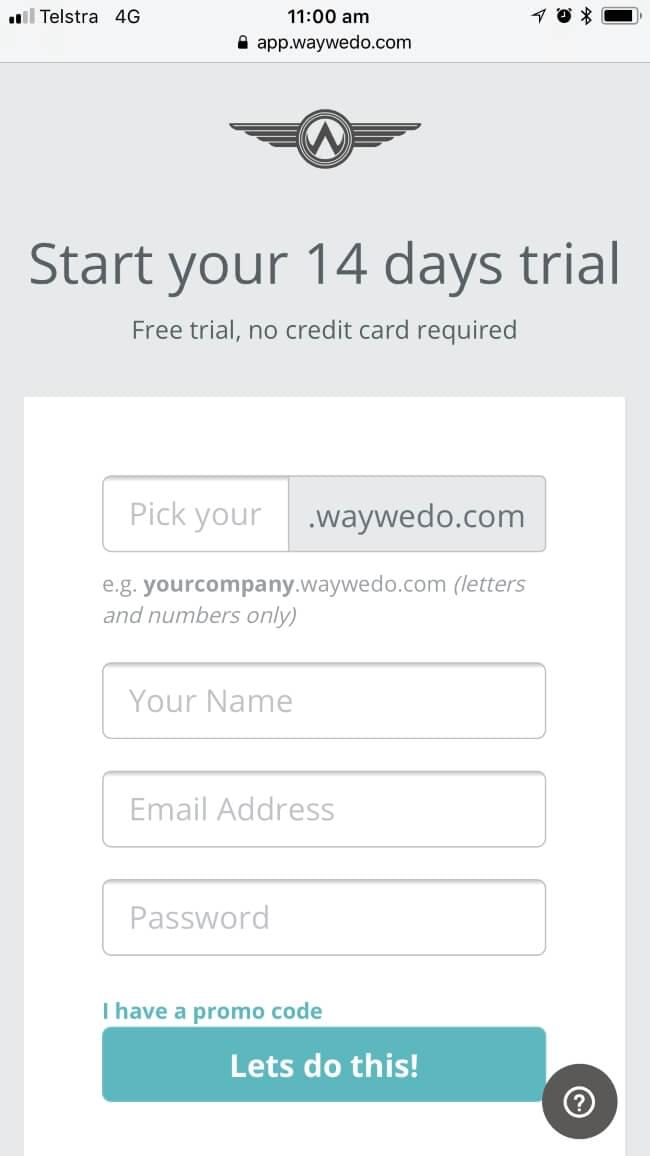

At Way We Do, we’re taking a deliberate and careful approach to AI development as we build our new AI Chat Assistant — a tool that connects people to the knowledge they need, giving teams fast, reliable access to company policies, procedures, and processes through Way We Do or their trusted AI assistant.

Rather than rushing to market, we’re investing in AI evaluation frameworks, known as “evals.”

Here’s how they work:

- We start with real-world questions and answers written by humans. These form the benchmark.

- The AI is then asked the same questions.

- We score its responses for accuracy, context, tone, and reliability, and compare our score with the AI’s self-rated score.

This process allows us to identify weaknesses and improve performance before releasing features to customers. It’s our way of ensuring the AI provides trustworthy, consistent, and compliant guidance — especially in sensitive, process-driven environments.
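To make the loop concrete, here is a minimal Python sketch of the three steps above. It is an illustration only, not our production implementation: `stub_model`, `overlap_score`, `run_evals`, the sample questions, and the `gap_threshold` value are all hypothetical, and the word-overlap metric is a crude placeholder for the human scoring of accuracy, context, tone, and reliability.

```python
from dataclasses import dataclass


@dataclass
class BenchmarkItem:
    question: str
    reference_answer: str  # written by a human; this is the benchmark


@dataclass
class ModelReply:
    answer: str
    self_score: float  # the AI's own rating of its answer, 0.0 to 1.0


def stub_model(question: str) -> ModelReply:
    """Hypothetical stand-in for a call to a real AI assistant."""
    canned = {
        "Who approves leave requests?": ModelReply(
            "The line manager approves leave requests.", 0.9
        ),
        "How long are invoices retained?": ModelReply(
            "Invoices are retained for two years.", 0.95
        ),
    }
    return canned[question]


def overlap_score(reference: str, answer: str) -> float:
    """Placeholder for reviewer scoring: share of reference words found in the answer."""
    ref_words = set(reference.lower().replace(".", "").split())
    ans_words = set(answer.lower().replace(".", "").split())
    if not ref_words:
        return 0.0
    return len(ref_words & ans_words) / len(ref_words)


def run_evals(benchmark, model, gap_threshold=0.1):
    """Ask the model each benchmark question and compare both scores."""
    results = []
    for item in benchmark:
        reply = model(item.question)
        reviewer_score = overlap_score(item.reference_answer, reply.answer)
        # A positive gap means the AI rated itself higher than the reviewer did.
        gap = reply.self_score - reviewer_score
        results.append({
            "question": item.question,
            "reviewer_score": reviewer_score,
            "self_score": reply.self_score,
            "overconfident": gap > gap_threshold,
        })
    return results


benchmark = [
    BenchmarkItem("Who approves leave requests?",
                  "The line manager approves leave requests."),
    BenchmarkItem("How long are invoices retained?",
                  "Invoices are retained for seven years."),
]

results = run_evals(benchmark, stub_model)
for row in results:
    print(row)
```

In this toy run the second answer gets the retention period wrong yet rates itself highly, so it is flagged as overconfident. Note that word overlap still gives that wrong answer a fairly high score, which is exactly why a real eval relies on human review against explicit criteria rather than a simple text match.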

“It’s not as simple as just plugging in the AI and away you go. You need structured evaluation loops, human oversight, and a commitment to diligence if you want people to trust the outcomes.”

The Bigger Picture: New Standards of Care, New Liability Norms

One of the most profound shifts under the surface is how the standard of care for professionals may evolve because of AI. As AI becomes more routine, professionals may be expected not only to use it but also to manage its risks: for example, by verifying AI output, overseeing AI tools, and ensuring data integrity. Indeed, a recent survey of legal professionals suggested that many now believe AI will be mainstream in practice settings within three years.

This raises two intertwined pressures:

- Professional liability exposure: If a professional fails to supervise AI appropriately, could that be negligent?

- Insurer underwriting exposure: If firms deploy AI in high-risk ways without full oversight, insurers may face unanticipated claims.

The result may be further tightening of policy wordings, increased premiums for firms using advanced AI without strong governance, and perhaps more frequent disputes about what is (and what is not) covered.

How Professional Indemnity Insurance Will Change

As we look forward, a few developments are particularly worth watching:

- How regulators around the world approach AI in professional services, and what those rules imply for liability and insurance.

- Whether insurers adopt more affirmative cover for AI-driven professional services (rather than relying on older wordings).

- The degree to which professionals will need to evolve their internal governance around AI (processes, data quality, human oversight, audit trails) in order to preserve their PI cover.

- Whether claims start arising in larger numbers that test policy wordings — as is already happening in law firms.

For professionals, technology offers transformational gains — but with technology comes transformation in risk. The cover you relied on yesterday may not respond in the same way tomorrow.

The Bottom Line: Trust Still Matters

AI has the potential to make professional services smarter and more consistent — but only if it’s built and governed responsibly.

The Deloitte case is a reminder that speed without supervision can quickly turn into liability. At Way We Do, we’re focused on building AI systems that are transparent, auditable, and aligned with real-world business standards.

Because in the end, the goal isn’t just innovation — it’s trust.