AI promised a productivity boom. Instead, some companies are drowning in output.

A report in seconds.

A proposal drafted before the meeting ends.

A summary that sounds sharp, confident, complete.

For executives under pressure to “do more with less,” AI feels like a gift.

But a growing body of research suggests something more complicated is happening inside organizations: AI may be increasing output — while quietly undermining real productivity.

In AI Productivity Blind Spot, Hamilton Mann — Group VP of Digital at Thales and lecturer at INSEAD and HEC Paris — argues that five hidden forces can neutralize or even reverse the gains companies expect from AI deployments.

The problem isn’t that AI is inefficient.

It’s that leaders are confusing efficiency with productivity.

The Jevons Paradox of AI: When Better Tools Mean More Work

Mann opens with the Jevons Paradox: when a resource becomes more efficient, overall consumption often increases rather than decreases.

Steam engines became more efficient — coal usage went up.

Fuel economy improved — total driving increased.

AI follows the same pattern.

If content is easy to generate, organizations generate more of it.

If reports take seconds, more reports are created.

If summarizing is effortless, everything gets summarized.

The result? More activity.

But not necessarily more value.
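A quick back-of-the-envelope sketch shows how this plays out. The figures below are hypothetical, chosen only to illustrate the pattern, and `total_hours` is an illustrative helper rather than anything from Mann's work: each report becomes ten times faster to produce, but the organization asks for fifteen times as many.

```python
def total_hours(items: int, minutes_per_item: float) -> float:
    """Total time spent producing `items` outputs, in hours."""
    return items * minutes_per_item / 60


# Hypothetical figures for illustration only.
before = total_hours(items=10, minutes_per_item=60)  # 10 reports, 1 hour each
after = total_hours(items=150, minutes_per_item=6)   # 10x faster, 15x more reports

print(f"before AI: {before:.0f} h, after AI: {after:.0f} h")
```

Under these assumptions the time per report falls sharply, yet the total time spent on reports rises from 10 hours to 15.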

Blind Spot #1: Faster Tasks ≠ Higher Productivity

One of the most common assumptions behind AI adoption is simple: if AI performs a task faster, productivity improves.

Mann argues that this “unit task = unit gain” logic is flawed.

As AI becomes more capable, usage expands, and people begin to offload their thinking to it. The research Mann cites links AI use with cognitive offloading and reduced critical thinking (Gerlich, 2025).

Studies in high-pressure contexts also show automation bias: people over-trust machine recommendations even when those recommendations are incorrect (Rosbach et al., 2024; Parasuraman & Manzey, 2010).

Over time, AI doesn’t just help people think.

It can change how they think.

And when organizations lose the ability to reason independently, speed gains become fragile.

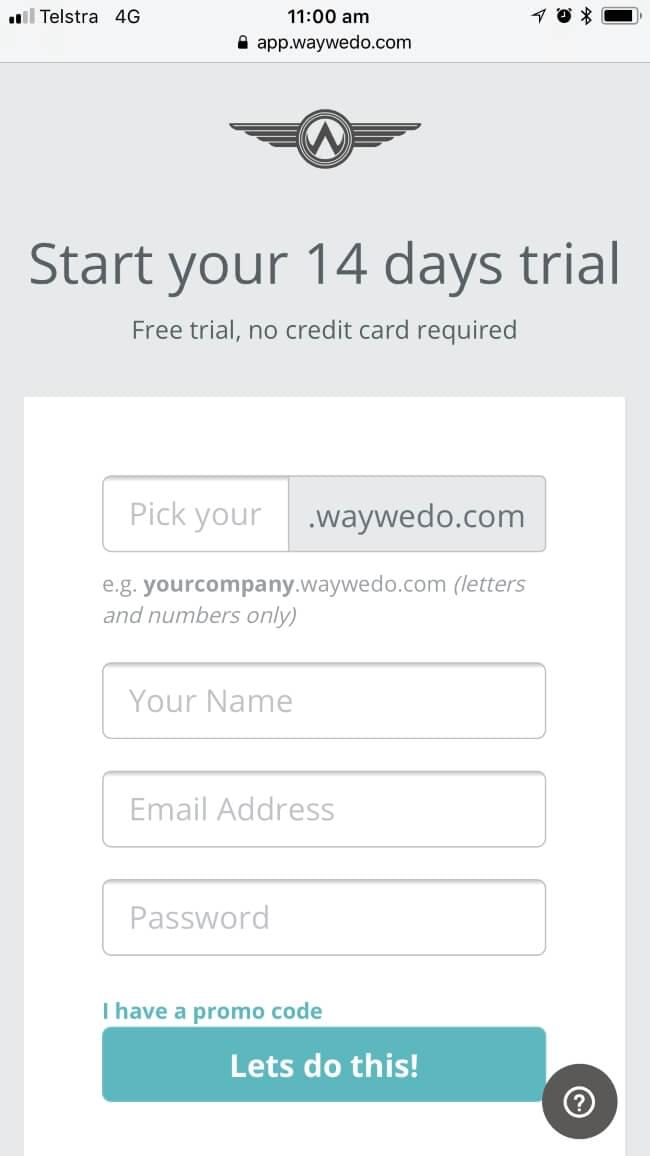

Where Way We Do fits

This is exactly why Human-in-the-Loop design matters.

Way We Do enables organizations to:

- Build mandatory review steps into workflows

- Require rationale at critical decision points

- Define escalation pathways when ambiguity exists

- Track accountability by role, not just by output

Rather than letting AI quietly replace cognitive effort, you design workflows that preserve it where it matters most.

Mann explicitly recommends embedding human-in-the-loop safeguards and tracking decision quality — not just task efficiency.

That’s governance in action.

Blind Spot #2: AI Generates “Pseudo-Work”

Another dangerous assumption: if AI can produce it cheaply, it must be valuable.

Mann describes how AI can create activity without purpose — redundant summaries, unused reports, content that looks productive but has no strategic impact.

Because AI’s marginal cost is near zero, organizations risk flooding themselves with output.

Someone still has to:

- Review it

- Validate it

- Filter it

- Decide what matters

That hidden labor doesn’t show up on dashboards.

The Upwork Research Institute’s 2024 survey illustrates the disconnect:

- 96% of executives expected productivity gains

- 77% of employees said AI increased their workload

- 39% now spend more time reviewing AI outputs

- 71% report burnout

AI didn’t eliminate work. It shifted it.
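To see how shifted work can hide from a dashboard, here is a minimal sketch of the accounting. The `TaskTiming` class and every number in it are assumptions made for illustration; none of them come from the survey.

```python
from dataclasses import dataclass


@dataclass
class TaskTiming:
    manual_minutes: float    # time to do the task without AI
    ai_draft_minutes: float  # time to prompt and generate a draft
    review_minutes: float    # time to review, validate, and correct the draft

    def apparent_saving(self) -> float:
        """Saving a dashboard sees if it only tracks drafting time."""
        return self.manual_minutes - self.ai_draft_minutes

    def net_saving(self) -> float:
        """Saving once review and validation time is counted."""
        return self.manual_minutes - (self.ai_draft_minutes + self.review_minutes)


# Hypothetical task: 45 minutes by hand, 5 to draft with AI, 30 to review.
timing = TaskTiming(manual_minutes=45, ai_draft_minutes=5, review_minutes=30)
print("apparent saving (minutes):", timing.apparent_saving())
print("net saving (minutes):", timing.net_saving())
```

With these made-up numbers, a 40-minute saving on paper shrinks to 10 minutes once review is counted. If review took longer than 40 minutes, the net saving would turn negative.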

Where Way We Do fits

Way We Do prevents pseudo-work by enforcing:

- A clear Definition of Done

- Structured quality gates before outputs move downstream

- Approval workflows tied to role accountability

- Documentation standards that separate “nice to have” from “strategically necessary”

Mann recommends benchmarking AI outputs against defined value criteria and broadening productivity metrics to include hidden validation costs.

With Way We Do, that isn’t theoretical — it’s operational.

Blind Spot #3: Hyper-Efficiency Can Break the System

In real organizations, tasks are interdependent.

If AI allows one team to produce 10 reports in a minute but downstream teams can only absorb one per hour, productivity doesn’t increase. Friction does.

Mann argues that when AI accelerates isolated tasks without modelling the system, it creates workflow desynchronization and overload.

Local efficiency becomes a negative externality.
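The mismatch in the reports example can be sketched as a simple backlog calculation. This is a rough illustration, not a model from Mann's work: `backlog_after` is a hypothetical helper, and it assumes both teams work at constant rates, the upstream team produces continuously, and the queue starts empty.

```python
def backlog_after(hours: float, produced_per_hour: float, absorbed_per_hour: float) -> float:
    """Unprocessed items after `hours`, assuming constant rates and an empty starting queue."""
    return max(0.0, (produced_per_hour - absorbed_per_hour) * hours)


# Rates from the example in the text: 10 reports per minute upstream,
# one report per hour downstream.
produced_per_hour = 10 * 60
absorbed_per_hour = 1

backlog = backlog_after(8, produced_per_hour, absorbed_per_hour)
print(f"backlog after an 8-hour day: {backlog:.0f} reports")
```

Under those assumptions, a single working day leaves thousands of reports that nobody downstream has had time to read. The downstream rate, not the upstream one, sets the pace of the whole system.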

Where Way We Do fits

Way We Do was designed for system-level process coherence, not isolated automation.

You can:

- Map end-to-end processes

- Assign roles to each step

- Visualize dependencies

- Control pacing through workflow triggers

- Define absorption thresholds before volume scales

Mann recommends modelling AI integration as a networked process and measuring ecosystem-level performance — not just task-level speed.

That’s exactly what structured process management delivers.

Blind Spot #4: AI Isn’t Plug-and-Play

Many companies add AI to legacy systems and expect transformation.

But research on digital transformation shows technology often gets grafted onto existing routines without redesigning underlying processes (Wessel et al., 2021).

In that scenario, AI amplifies old inefficiencies.

Mann recommends conducting a “process deconstruction audit” and redesigning workflows before deployment.

Where Way We Do fits

Way We Do enables:

- Formal process mapping

- Gap analysis against new AI capabilities

- Workflow redesign before automation

- Governance controls embedded from the outset

- Continuous monitoring of systemic performance uplift

AI layered on chaos creates faster chaos.

AI embedded in a governed system creates durable performance.

Blind Spot #5: Productivity Isn’t Just Output

The final blind spot may be the most profound: reducing productivity to output divided by tangible inputs.

That ignores intangible drivers like:

- Judgment

- Emotional intelligence

- Cultural fluency

- Leadership

Meta-analyses show emotional intelligence correlates strongly with job performance (Joseph & Newman, 2010; Doğru, 2022). Research also shows AI hasn’t achieved parity in high-context translation work (Toral et al., 2018). And in experiments with more than 6,000 participants, empathic responses were rated as more supportive when believed to be written by humans — even when the content was identical (Rubin et al., 2025).

AI can simulate empathy. But trust, meaning, and authenticity remain human-attributed.

Where Way We Do fits

Way We Do helps organizations:

- Identify high-intangible-value workflows

- Protect judgment-intensive steps from full automation

- Use AI in augmentation mode (not blind substitution)

- Track compliance and quality without removing human accountability

It’s not about resisting AI.

It’s about designing where AI belongs — and where it doesn’t.

From Artificial Intelligence to Artificial Integrity

Mann concludes that the future isn’t just about smarter AI but about what he calls Artificial Integrity: systems that operate within purpose, context, and responsibility.

AI can optimize execution.

It cannot define direction.

That requires governance.

The Companies That Win

The organizations seeing durable gains from AI are not the ones automating the most.

They are the ones that:

- Define “done” clearly

- Map processes before accelerating them

- Embed human-in-the-loop controls

- Protect judgment-heavy work

- Measure system performance — not just speed

Way We Do sits in that space.

It turns:

- Process into workflow

- Governance into daily practice

- Human oversight into structured design

- AI from a productivity illusion into a managed capability

AI can make work faster.

But without process architecture and governance, faster isn’t better.

The companies that thrive in this next phase won’t just deploy AI.

They’ll design integrity around it.